From elements on your landing pages, to your Email marketing and your other online (and offline) marketing efforts, there is always room for improvement, which means that there are always elements that can be tested to improve your eventual sales performance.

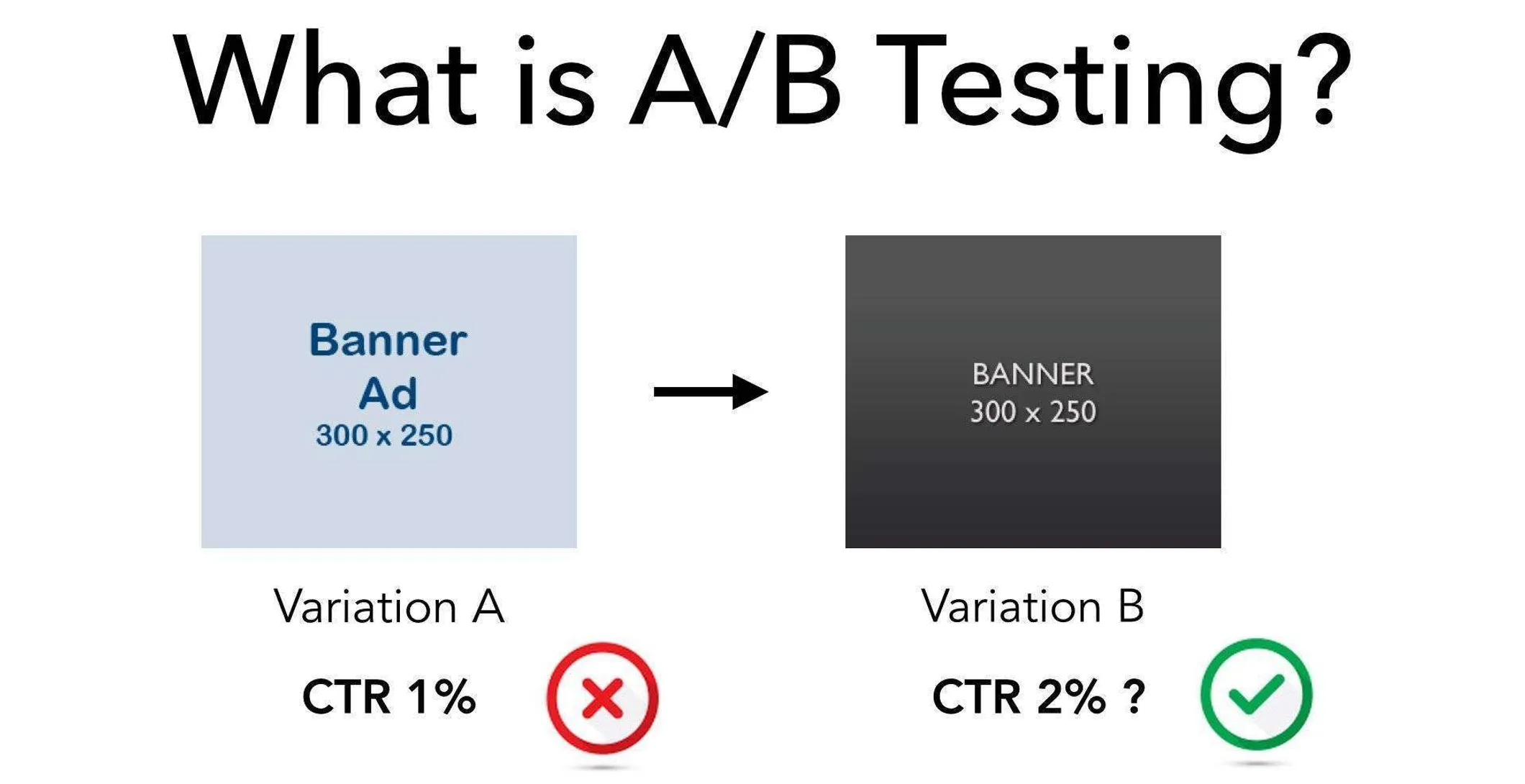

In this article we are going to take a more in depth look at A/B testing and the importance of A/B testing in your analytics.

Why analytics is essential to A/B Testing

Unfortunately, there is more to A/B Testing than just trying out different colour buttons and waiting to see which one gets the most clicks.

Any time we must measure and compare collections of things, we need to use statistics.

When you use proper analytics the statistics you draw will provide you with inference on your results, which can help you to make mindful business decisions.

What we are trying to find when run an A/B test is the mean or the average result of our sample. This is the result we see most frequently and can expect to see most frequently in the future. Finding the mean helps us to minimise our risk by utilizing the variable most likely to succeed.

The next thing we look for is the variance. The Variance is the average variability of the data, or the range within which mean falls, from one extreme to another.

The smaller the variance is, the more precise your results will be.

But we’re getting ahead of ourselves. Before we can do any of these things we must lay out the parameters of the test.

Sample Size and Duration

One of these parameters is the sample size of your test group.

You must predetermine the sample size of your test before you start. If you don’t you could easily keep running the test forever! Or you might not test enough people to get a true result.

Many A/B Testing apps don’t wait for a fixed horizon before giving statistics. Your test can and will go through dips in relevance known as statistical significance and statistical insignificance. You need to allow enough time to be able to read the overall mean through a full variance of results, and you need to read it with enough of the dips and peaks (variance again) to get a true reading. The only way to do that? Time.

One of the biggest (and most common) mistakes many new marketers make is to call the statistics in too soon. You can go hugely off course if you base your decisions on just a peak or a dip, rather than wait it out a bit to find the mean.

P-Value

If you have been reading up on statistical significance you have probably come across the term “P-Value”. This is measure of evidence against a null hypothesis. Or to put it simply: the control in the test group.

It is the probability you have of seeing a particular result, when you assume that the null hypothesis is correct (in other words you bet against your own educated guess).

The reason P-value exists is because most of the time we come into a test with a preconception of what our findings will be, but we want to be sure of them before applying them to our marketing.

It allows us to see how far off of the mark (or how near) we are. This may seem like a waste of time, but actually, it isn’t.

By setting a P-value based on our anticipated findings we can see of we are on the right track in our thinking around the variables.

If the results are near to P-value then we know we are on the right track. If they are miles off it may indicate that a new approach is required.

Statistical Power

Statistical Power is the term that refers to anticipating an effect which is then proven to actually be there. Statistical significance is the probability of an effect where none exists.

If you low statistical power you are less likely to be perusing the most advantageous action in your testing.

When you have high statistical power it signifies that you are moving in a positive direction.

Regressing to the Mean

You may notice that most tests will have wild fluctuations in results at the start of a test, which will slowly but surely, over time, stay closer to the average. This is called regression to the mean.

Wikipedia gives us a good example:

“Imagine you give a class of students a 100-item true/false test on a subject. Suppose all the students choose their all their answers randomly. Then, each student’s score would be a realization of independent and identically distributed random variables, with an expected mean of 50. Of course, some students would score much above 50 and some much below.

So say you take only the top 10% of students and give them a second test where they, again, guess randomly on all questions. Since the mean would still be expected to be near 50, it’s expected that the students’ scores would regress to the mean – their scores would go down and be closer to the mean.”

In other words, it’s essential to give your test enough time to mature so that you can draw true conclusions. If you pull your results in too early you are likely to read false positives or other inaccurate indications.

Another reason to run linger tests is because any test that has to do with the internet is also subject to the novelty effect. The novelty effect states that anything new, or novel, online will receive a lot of attention at first, and then the novelty will wear off. Only after the novelty has warn off can you determine whether a tested aspect is actually inferior, superior, or a mere novelty.

In Closing

The golden rules of A/B testing are:

Test for at least two full business cycles

Make sure your sample size is large enough (test enough different people)

Remember to factor in confounding variables and external factors such as holidays, special days and other short-lived influencers

Set a fixed horizon (end date) and sample size for your test before you run it, and don’t stop the test until you reach them

Don’t peak! Wait until the test ends to draw results. If there hasn’t yet been regression to the mean then you need to test longer or a larger sample, or both